You may be feeling a strange kind of pressure at work right now. Use AI. Move faster. Be efficient. Do not get it wrong. And somehow still be the one responsible for the judgment, the risk, and the outcome.

In many workplaces, people are being asked to use powerful tools in workflows that are still taking shape, often without a clear answer to a basic question: what exactly is the human responsible for here, and what exactly is AI supposed to do here?

When AI use goes poorly, we often focus on the tool. Was it inaccurate? Overconfident? Biased? Hallucinating? Those are real concerns, but often the bigger problem is that the collaboration itself was never clearly designed. The human role, AI role, and review process were fuzzy. And when those things are unclear, risks remain hidden, accountability gets blurry, and good judgment is hard to protect.

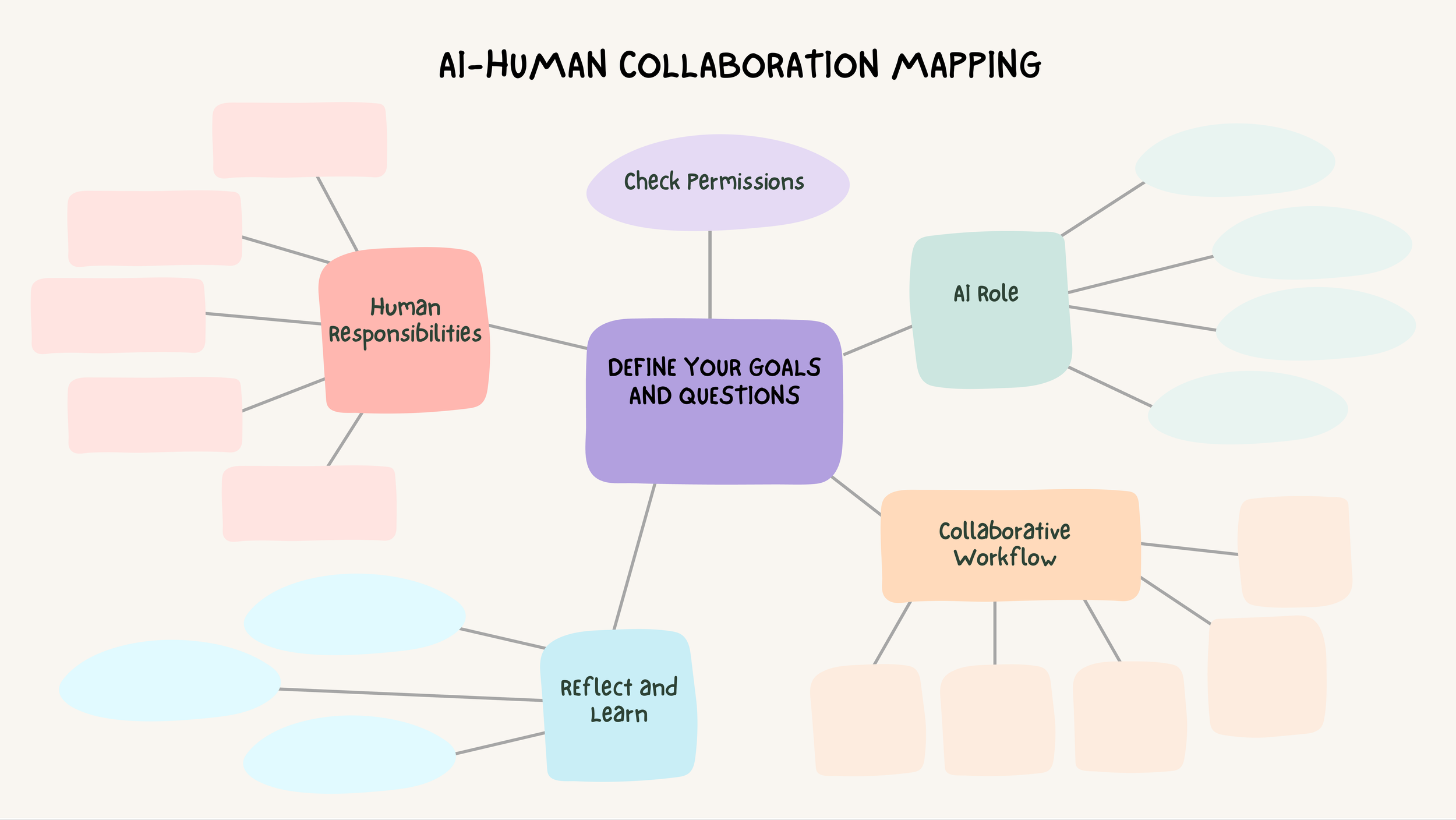

I have been working on a simple way to slow the process down before you delegate to AI: Collaboration Mapping.

Collaboration Mapping is a practical way to think through what the true objective is, what is permitted, what the human owns, what AI is being asked to do, where review happens, and what uncertainty remains. These questions are easy to skip when people are under pressure and the tool seems impressively capable. But that is exactly when they matter most. Mapping does not need to be exhaustive. For routine, low-stakes tasks, a quick mental check may be enough. The fuller process is most valuable when the stakes are higher, the workflow is new, or the consequences of getting it wrong are hard to reverse.

Why this matters

AI can create real value. It can organize, summarize, draft, recommend, structure options, and speed up parts of a workflow. But speed is not the same as clarity. In many cases, AI can help most with production, while the human contribution lies in defining the purpose, supplying the context, interpreting ambiguity, making tradeoffs, reviewing for ethics and quality, and remaining accountable for the outcome. If that division of labor is not explicit, people can slide from “AI is helping me think” to “AI is doing my thinking for me,” and that shift may not be obvious in the moment. A polished draft can make weak thinking look stronger than it is. Mapping makes the collaboration more visible, more deliberate, and easier to manage.

Mapping does something else important. It helps people ask better questions about how the work should be done in the first place. Not just “Can AI do this?” but “What part of this work actually requires human judgment? What should never be delegated? Where does AI create real efficiency, and where does it simply shift effort somewhere else?” Better questions lead to better collaboration. Better collaboration makes it easier to protect human judgment while creating genuine efficiencies with AI.

What it means to map the collaboration

A good human-AI collaboration map asks six practical questions.

What is the work?

What is the goal? What question are we trying to answer? What is a good outcome?

What is permitted?

Can this task and this information appropriately go into this tool? Is this a public model, an enterprise system, an approved vendor, or something embedded in an internal workflow? What should never be entered, shared, or delegated here?

What remains a human responsibility?

What is at stake if this is wrong? What context and constraints must the human provide up front? What requires human interpretation, discretion, evaluation, ethics, or accountability?

What is AI specifically being asked to do?

Is it organizing? Summarizing? Recommending? Drafting? Executing? Influencing a judgment? Triggering action?

How will the workflow actually run?

Where does AI enter? Where are the pauses, handoffs, checkpoints, and escalation points? What part of the process does the human need to stay involved in, and why?

What did we learn?

What did AI improve? What still needed human judgment? What remained uncertain? How did the process change our thinking?

These questions help separate responsible use from vague use, and help teams see more clearly where AI is beneficial and where human involvement remains essential.

A simple example

Imagine a team preparing a board memo on whether to adopt an AI tool for internal compliance monitoring. Without mapping, the task might sound simple: use AI to help draft the memo. But what is the real goal? What materials can be used? What still requires human judgment? What exactly is AI being asked to do? Who reviews the output, and at what point?

A mapped version is much clearer. The goal is to prepare a board-ready recommendation. Permissions are limited to approved internal materials. The human role is to judge the stakes, define the decision, set the relevant risk tolerance, and own the recommendation. AI can help organize diligence notes, compare vendor options, summarize strengths and limitations, and draft a first pass. The workflow then includes explicit review by the human, legal or compliance, and senior leadership. This is a very different collaboration from simply saying, “Have AI help with the memo.” It is also much easier to defend.

The deeper shift

For many professionals, this is not just a workflow challenge. It feels personal and can raise uncomfortable questions about where their value lies. If AI is getting better at drafting, summarizing, and producing first passes, what remains distinctly human?

The value humans add may be shifting from production alone toward goal-setting, context, ambiguity, ethical judgment, tradeoffs, accountability, relationship management, and trust. That means teams must be more intentional about protecting the parts of the work that still depend on human judgment. Mapping helps make that value visible by clarifying what the human contributes and why it matters. In doing so, it also helps organizations capture AI’s efficiency gains more effectively.

Three practical takeaways

Before you delegate a task to AI this week, pause and ask:

What is the actual decision or outcome that matters here?

What needs to be decided, justified, or owned?

What must remain a human responsibility?

Look for judgment, context, discretion, ethics, accountability, and relationship consequences.

Where are the checkpoints?

Decide where review happens, who does it, and what they are checking for.

When done well, mapping helps people feel less overwhelmed, clearer about their human contribution, and more efficient in using AI where it creates real value.

Collaboration mapping works even better with a team. Seventeen Camels offers facilitated workshops designed to help organizations build clearer, more responsible AI workflows.